Let’s Dive Into Data

Creating an Artificial Intelligence Platform: Part 2

In our first instalment looking at how you create an Artificial Intelligence Platform, we started with the very first step – identifying the problem you’re looking to resolve. With this step done, you can now start to think about what data exists that is relevant to the issue at hand. Before we dive into data, let’s pause to cover some basics.

Data comes in two guises: structured and unstructured. We’ve mentioned these two formats in previous posts, but here’s a quick summary:

- Structured data is information which follows a pre-determined format to make it straightforward to collate, store, search and compare.

- Unstructured data is information that doesn’t conform to structured data requirements, such as emails, report documents, or digital media.

(We’ll have a blog post about this in more detail soon.)

In the previous blog on this topic we mentioned using AI to help reduce the risk of sunburn. A project like this will have lots of structured data from meteorological sources, covering the amount of sunlight and related levels during set periods – day, week, month and year. There’s also unstructured data in the form of images of skin exposed to the sun, showing different stages and severities of burn. So, there are a couple of practical examples of the two types of data.
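To make the difference concrete, here is a minimal Python sketch of the sunburn example. Everything in it is made up for illustration: the `UVReading` record, its field names, the sample values and the threshold of 6 are all assumptions, not real meteorological data. It shows why structured data is easy to search and compare, while an image is just a run of bytes until something interprets it.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class UVReading:
    """A hypothetical structured record: every reading has the same fields."""
    day: date
    location: str
    uv_index: float


# Illustrative values only, not real measurements.
readings = [
    UVReading(date(2024, 7, 1), "Leeds", 6.5),
    UVReading(date(2024, 7, 2), "Leeds", 3.0),
    UVReading(date(2024, 7, 3), "Leeds", 7.2),
]

# Because the format is fixed, searching and comparing is a one-liner.
high_uv_days = [r.day for r in readings if r.uv_index >= 6]

# Unstructured data has no such fields. A photo of sunburnt skin is just
# bytes; nothing here says "severity" or "stage" until a model extracts it.
# (These four bytes are only the start of a PNG signature, used as a stand-in.)
skin_photo = bytes([0x89, 0x50, 0x4E, 0x47])

print(high_uv_days)
print(len(skin_photo), "bytes with no built-in meaning")
```

The structured list can be filtered straight away, whereas the photo would need an image model before it could answer even a simple question.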

A quick aside here: as unstructured data doesn’t conform to defined rules, it’s a lot more difficult to analyse than its structured counterpart. For a long time this was a big hurdle for Artificial Intelligence to get past, and AI took a big leap forwards once unstructured data could be analysed.

Keep it Clean

So, you dive into data, throw it in the magical AI machine and go get a coffee, right?

Wrong. First, make sure your data is clean. This part of the second step is absolutely crucial to the success of your AI project. Remember the old saying Garbage In, Garbage Out? Well, it fits perfectly here. If you use bad data in AI to analyse and predict, you just get a bad result.

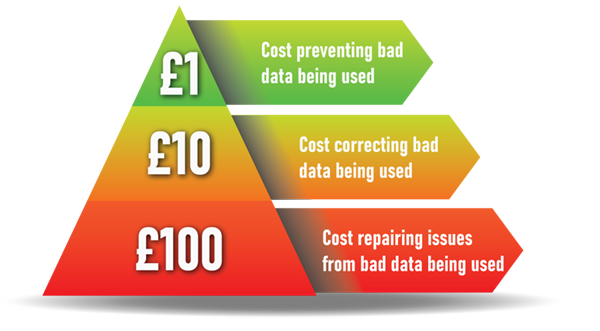

This brings up a point we’ve mentioned previously: it’s vital your staff use applications and input data correctly. The fewer errors created at source, the less time it takes to correct them, and the lower the risk of major mistakes happening further down the line. We call this the 1-10-100 rule – where sorting the issue out at the beginning avoids a far larger cost later. And with data, this picture of exponential growth in the time, resource and cash being spent is very accurate.
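The arithmetic behind the 1-10-100 rule is easy to sketch. The rule is usually stated as roughly 1 unit of cost to stop an error at the point of entry, 10 to correct it later, and 100 if it is never fixed and causes a failure. The snippet below assumes those ratios; the stage names and the figure of 100 errors are hypothetical, chosen only to show the scale.

```python
# Relative cost per error at each stage, following the usual 1-10-100 ratios.
# The stage names are our own labels for this sketch.
COST_PER_ERROR = {"prevent": 1, "correct": 10, "fail": 100}


def total_cost(errors_by_stage):
    """Add up the relative cost of errors, given how many were handled at each stage."""
    return sum(COST_PER_ERROR[stage] * count for stage, count in errors_by_stage.items())


# The same 100 errors, dealt with at three different points.
caught_at_entry = total_cost({"prevent": 100})
fixed_later = total_cost({"correct": 100})
never_fixed = total_cost({"fail": 100})

print(caught_at_entry, fixed_later, never_fixed)
```

With these assumed ratios, the same batch of errors costs ten times more to clean up later and a hundred times more if nobody cleans it up at all.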

So, check your data and get it spotless. If you don’t clean it, your data will cause more and more problems further down the line. In reality, nearly all data needs some work before it can be used. Even the most diligent inputting will contain a percentage of minor errors, and there can be times when the data has to be modified to be acceptable for use. The good news is there are numerous tools which automate a lot of the work involved. There are even some that use Artificial Intelligence – is there nothing that AI can’t do?
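As a rough idea of what “cleaning” can mean in practice, here is a small Python sketch using only the standard library. It is not how any particular tool works. The `clean_readings` function, the field names and the accepted range of 0 to 20 for a UV index are all assumptions made for this example, and it expects every value to arrive as text.

```python
def clean_readings(rows):
    """Tidy hypothetical UV readings; return (cleaned, rejected) lists."""
    cleaned, rejected, seen = [], [], set()
    for row in rows:
        # Normalise text fields: trim stray spaces and fix capitalisation.
        location = row.get("location", "").strip().title()
        day = row.get("day", "").strip()
        try:
            uv = float(row.get("uv_index", ""))
        except (TypeError, ValueError):
            rejected.append(row)  # not a number, e.g. "n/a"
            continue
        # Reject missing fields and implausible values (range is an assumption).
        if not location or not day or not (0 <= uv <= 20):
            rejected.append(row)
            continue
        # Quietly drop exact repeats of the same day and location.
        if (day, location) in seen:
            continue
        seen.add((day, location))
        cleaned.append({"day": day, "location": location, "uv_index": uv})
    return cleaned, rejected


# Illustrative rows with the kinds of slips that creep in at data entry.
raw_rows = [
    {"day": "2024-07-01", "location": " leeds ", "uv_index": "6.5"},
    {"day": "2024-07-01", "location": "Leeds", "uv_index": "6.5"},  # duplicate
    {"day": "2024-07-02", "location": "Leeds", "uv_index": "n/a"},  # not a number
    {"day": "2024-07-03", "location": "Leeds", "uv_index": "65"},   # likely a typo
]

cleaned, rejected = clean_readings(raw_rows)
print(len(cleaned), "clean rows,", len(rejected), "rows to review")
```

Keeping the rejected rows, rather than silently throwing them away, means someone can go back to the source and correct them.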

In the next step in our little series looking at how you create an Artificial Intelligence platform, we’ll move on to a brief look at algorithms and the types you can use depending on what you’re trying to achieve.