When we discuss our work, we often hear that people feel AI only works with big data, so we thought we would write a short article looking at whether that is true.

Whenever the topic of data analytics crops up, it is invariably tied to the broader technology term of ‘big data’. The huge amount of information that flows into, and is created by, organisations every day can hold answers to problems both mundane and critical. But this data deluge comes in different formats, and can be structured or unstructured depending on its source. Gaining insight into this data, whichever form it takes, can potentially improve a business. And with machine learning (ML) and AI comes the ability to predict outcomes, improving confidence in what the data is telling you.

Perception Vs. Reality

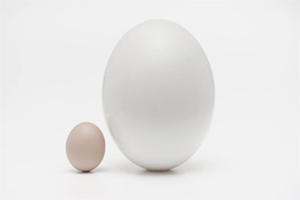

We know that data science can help shape many facets of our world. But is AI limited in what it can handle, and does it therefore only work with big data? The problem here is that AI is perceived to be intrinsically linked to big data. The view is that you need large amounts of information and complex platforms to handle it. This view is only reinforced when you see the likes of IBM, Oracle, HP, Teradata and SAP heavily promoting their services in this area. It creates a perception that data science is limited to big data, and that’s for the Big Boys.

That can be off-putting to smaller organisations and the ISV sector. The assumption is that they don’t create or store anywhere near enough data to make deploying ML/AI feasible or cost-effective. Their needs don’t call for long-term consultancy contracts and their data doesn’t fill a server farm. The goals they’re trying to reach are more grounded and relatable to their customers.

The reality is very different. ML/AI can be hugely cost-effective for smaller organisations, and doesn’t need to carry a hefty price tag or lengthy timescales. In smaller organisations the need for ML/AI is usually more focused, typically on predicting linear outcomes. That means there is not necessarily a need for huge volumes of data.
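
To make the point about linear outcomes concrete, here is a minimal sketch in Python. It assumes that "linear outcome" means a straight-line relationship that ordinary least squares can fit, and it uses made-up figures (twelve months of hypothetical marketing spend against orders) rather than real customer data. The function name `fit_line` and every number in it are ours, for illustration only.

```python
import numpy as np


def fit_line(x, y):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    # Design matrix: one column for x, one column of ones for the intercept.
    design = np.column_stack([x, np.ones_like(x)])
    (slope, intercept), *_ = np.linalg.lstsq(design, y, rcond=None)
    return float(slope), float(intercept)


# Hypothetical, synthetic data: 12 monthly observations, nothing more.
rng = np.random.default_rng(0)
spend = np.arange(1, 13, dtype=float)  # months 1..12 of spend (arbitrary units)
orders = 3.0 * spend + 20.0 + rng.normal(0.0, 1.0, size=spend.size)

slope, intercept = fit_line(spend, orders)
forecast = slope * 13 + intercept  # prediction for the next, unseen level of spend
print(f"slope={slope:.2f}, intercept={intercept:.2f}, forecast={forecast:.1f}")
```

With only a dozen points, the fitted slope and intercept land close to the values used to generate the data (3 and 20). That illustrates how a focused, linear question can be answered from a small dataset, provided the underlying relationship really is close to linear and the noise is modest.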

AI Isn’t Expensive

From the perspective of software houses, the cost of expanding existing applications to include ML/AI capabilities can be prohibitive. Development requires specific skills beyond coding, and the resources required can be an unwelcome pull away from existing product roadmaps. Connecting to an existing platform via the cloud is a tried and tested approach for other solutions, but this too may have a high cost attached. That cost can come through setup or ongoing subscription fees, or even through the need for complete rewrites to conform to proprietary technology. The newer generation of robust ML/AI platforms, as typified by our own, overcomes this through the use of open source code and is capable of handling data regardless of source and format. This nimbler, open approach makes the use of ML/AI much more straightforward, cutting development time and providing a service that can deliver fast, practical benefits to users.